AI Chat Logs Seizable, Dutch Lawyers Disciplined, Pentagon Reviews AI Vendor, AI Law Uncertainty Grows – Issue #06

Legal exposure tied to AI chatbot conversations, sanctions over AI-generated legal documents, federal review of an AI vendor relationship, and ongoing uncertainty around AI ownership and data security regulation.

On-Prem AI Weekly – Issue #06

February 18, 2026

Welcome to the On-Prem AI Weekly Brief. This issue looks at how AI systems increasingly intersect with legal procedure, professional standards, federal oversight, and evolving regulatory frameworks.

Federal judge rules AI chatbot conversations can be seized as evidence

A federal court has determined that conversations with AI chatbots may be obtained as evidence in fraud investigations. When courts assess what material is discoverable, the ruling effectively places certain AI interactions in the same category as other digital communications. As AI tools become embedded in financial and operational workflows, the decision expands the legal exposure tied to chatbot use.

Dutch lawyers disciplined over AI-generated legal documents

Two Dutch attorneys faced disciplinary action after submitting court documents that contained fabricated citations generated by AI systems. The case has drawn attention within legal circles to the verification responsibilities that remain with practitioners, even when generative tools are used to draft filings. Regulatory bodies have reiterated that professional standards apply regardless of drafting method.

Pentagon reviewing AI vendor relationship

The US Department of Defense is reviewing its relationship with AI company Anthropic. The review centers on how federal agencies engage with advanced AI vendors and how those relationships are structured. The increased scrutiny reflects broader institutional attention to governance and oversight as AI systems move into national-level operational environments.

AI law remains unclear as ownership and data security risks grow

Reporting from ITWeb highlights ongoing uncertainty about AI-related legal frameworks, particularly in areas such as model ownership, intellectual property, and data security. As organizations expand AI deployments, unresolved questions around liability, data handling, and regulatory compliance are creating additional governance challenges for enterprise and public sector institutions.

Who is On Prem?

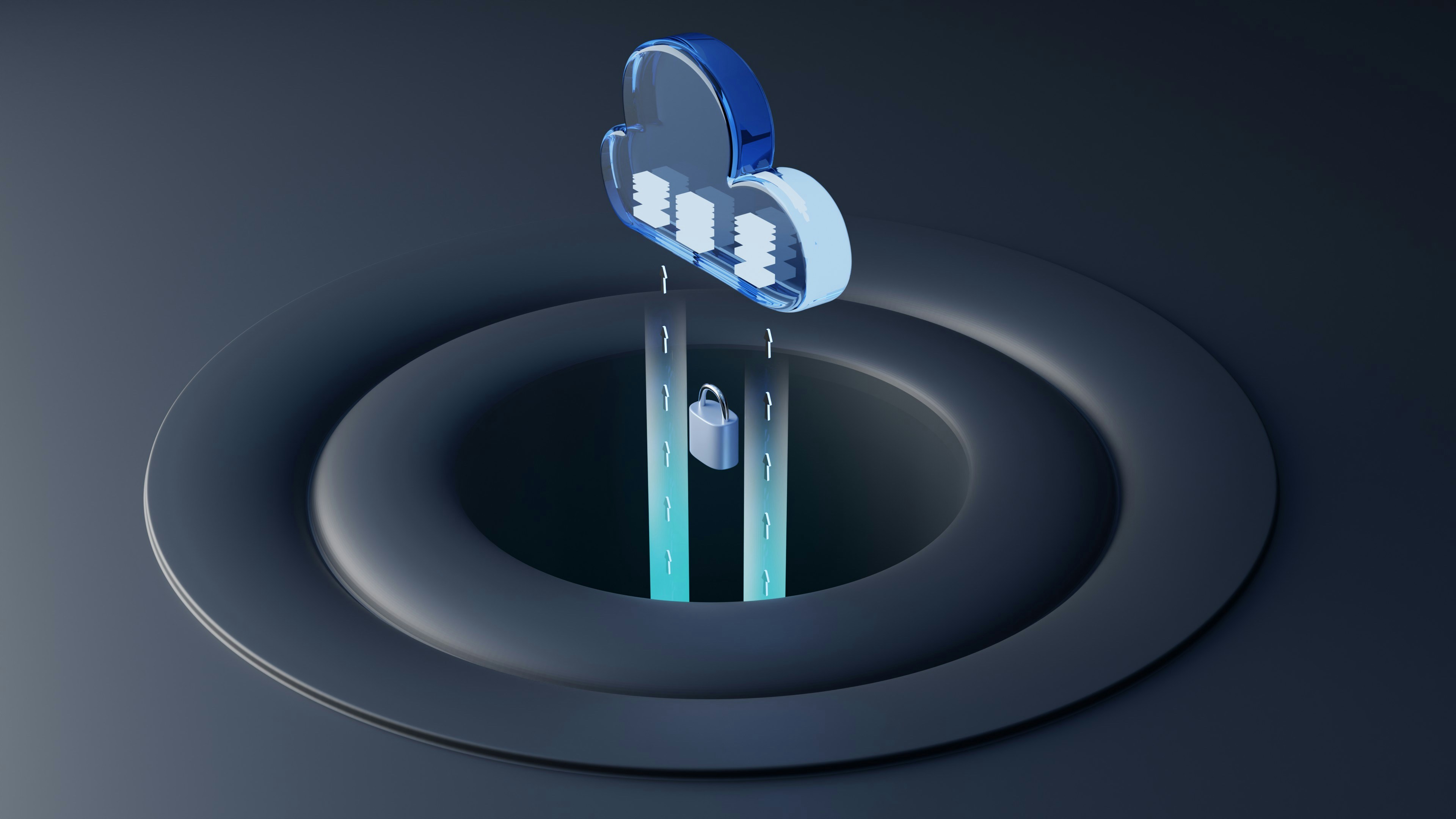

On Prem builds private AI computers that run large language models entirely inside your network. Designed for organizations that require control, auditability, and zero cloud dependency, On Prem enables teams to deploy and operate AI on their own hardware, under their own policies.

Our systems deliver AI inference as a governed, network-accessed resource. Every prompt and response remains private, identity-bound, and fully auditable, with no subscriptions and no external data exposure. Organizations own their models, their data, and their reasoning.

Learn more at onpremcomputer.com